Similarity search using Ollama

This tutorial shows how to use Ollama to generate text embeddings. It features a Node.js application that uses a locally running LLM to generate embeddings for news article headlines and descriptions. The embeddings are stored in a YugabyteDB database using the pgvector extension.

Prerequisites

- YugabyteDB v2025.2 or later

- Ollama

- Node.js v18+

- git-lfs

- Docker

Set up YugabyteDB

Start a 3-node YugabyteDB cluster in Docker (or feel free to use another deployment option):

rm -rf ~/yb_docker_data
mkdir ~/yb_docker_data

docker network create yb-network

docker run -d --name ybnode1 --hostname ybnode1 --net yb-network \
    -p 15433:15433 -p 7001:7000 -p 9001:9000 -p 5433:5433 \
    -v ~/yb_docker_data/node1:/home/yugabyte/yb_data --restart unless-stopped \
    yugabytedb/yugabyte:2025.2.3.0-b149 \
    bin/yugabyted start \
    --base_dir=/home/yugabyte/yb_data --background=false

docker run -d --name ybnode2 --hostname ybnode2 --net yb-network \
    -p 15434:15433 -p 7002:7000 -p 9002:9000 -p 5434:5433 \
    -v ~/yb_docker_data/node2:/home/yugabyte/yb_data --restart unless-stopped \
    yugabytedb/yugabyte:2025.2.3.0-b149 \
    bin/yugabyted start --join=ybnode1 \
    --base_dir=/home/yugabyte/yb_data --background=false

docker run -d --name ybnode3 --hostname ybnode3 --net yb-network \
    -p 15435:15433 -p 7003:7000 -p 9003:9000 -p 5435:5433 \
    -v ~/yb_docker_data/node3:/home/yugabyte/yb_data --restart unless-stopped \
    yugabytedb/yugabyte:2025.2.3.0-b149 \
    bin/yugabyted start --join=ybnode1 \
    --base_dir=/home/yugabyte/yb_data --background=false

Navigate to the YugabyteDB UI at http://127.0.0.1:15433 to confirm that the database is up and running.

Set up the application

Download the application and provide settings specific to your deployment:

- Clone the repository.

  git clone https://github.com/YugabyteDB-Samples/ollama-news-archive.git

- Install the application dependencies.

  cd ollama-news-archive
  git lfs fetch --all
  npm install
  cd backend/ && npm install
  cd ../news-app-ui/ && npm install

- Configure the database connection parameters in {project_dir}/backend/index.js.
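As a rough sketch of what these connection settings might look like, the following helper builds a configuration object that could be passed to the driver's Pool constructor. The function name and the environment variable names (DB_HOST and so on) are hypothetical, and the defaults assume the Docker cluster started above with the default yugabyte user and database on port 5433. The sample's index.js may organize its settings differently.

```javascript
// Hypothetical helper: builds connection settings for the Pool constructor.
// The environment variable names are illustrative, not taken from the sample app.
function buildPoolConfig(env = process.env) {
  return {
    host: env.DB_HOST || "127.0.0.1",
    port: Number(env.DB_PORT || 5433), // YSQL port published by ybnode1
    database: env.DB_NAME || "yugabyte", // assumed default database
    user: env.DB_USER || "yugabyte", // assumed default user
    password: env.DB_PASSWORD || "yugabyte", // assumed default password
    max: 10, // maximum number of pooled connections
  };
}

// Usage (requires the @yugabytedb/pg package installed in backend/):
// const { Pool } = require("@yugabytedb/pg");
// const pool = new Pool(buildPoolConfig());
```

Reading the values from the environment keeps credentials out of source control, which matters more once you move from a local Docker cluster to a shared deployment.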

Get started with Ollama

Ollama provides installers for a variety of platforms, and the Ollama models library provides numerous models for a variety of use cases. This sample application uses nomic-embed-text to generate text embeddings. Unlike some models, such as Llama3, which are run using the Ollama CLI, an embedding model can be called by naming the desired model in a request to a REST endpoint.

- Pull the model using the Ollama CLI.

  ollama pull nomic-embed-text:latest

- With Ollama up and running on your machine, run the following command to verify the installation:

  curl http://localhost:11434/api/embeddings -d '{ "model": "nomic-embed-text", "prompt": "goalkeeper" }'

The following output is generated, providing a 768-dimensional embedding that can be stored in the database and used in similarity searches:

{"embedding":[-0.6447112560272217,0.7907757759094238,-5.213506698608398,-0.3068113327026367,1.0435500144958496,-1.005386233329773,0.09141742438077927,0.4835842549800873,-1.3404604196548462,-0.2027662694454193,-1.247795581817627,1.249923586845398,1.9664828777313232,-0.4091946482658386,0.3923419713973999,...]}
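The same request can be made from Node.js. The following is a minimal sketch that assumes Node.js 18 or later (for the global fetch function) and reuses the endpoint and payload from the curl command above. The names embedText and toVectorLiteral are hypothetical helpers, not part of the sample application.

```javascript
// Endpoint and payload shape mirror the curl command above.
const OLLAMA_URL = "http://localhost:11434/api/embeddings";

// Requests an embedding from a locally running Ollama server.
// Relies on the global fetch available in Node.js 18 and later.
async function embedText(text, model = "nomic-embed-text") {
  const response = await fetch(OLLAMA_URL, {
    method: "POST",
    headers: { "Content-Type": "application/json" },
    body: JSON.stringify({ model, prompt: text }),
  });
  if (!response.ok) {
    throw new Error(`Ollama request failed with status ${response.status}`);
  }
  const { embedding } = await response.json();
  return embedding; // array of numbers
}

// Formats an array of numbers as a pgvector text literal such as "[1,2.5,-3]".
function toVectorLiteral(embedding) {
  return `[${embedding.join(",")}]`;
}

// Usage, with Ollama running:
// embedText("goalkeeper").then((e) => console.log(e.length, toVectorLiteral(e.slice(0, 3))));
```

The bracketed text form produced by toVectorLiteral is the format pgvector accepts for vector values, which is why the application wraps the embedding in square brackets before passing it to a query.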

Load the schema and seed data

This application requires a database table that holds information about news stories. The schema creates a news_stories table for this purpose.

- Copy the schema to the first node's Docker container as follows:

  docker cp {project_dir}/database/schema.sql ybnode1:/home/db_schema.sql

- Copy the seed data file to the Docker container as follows:

  Because it's an LFS file, you'll need to copy the original file from the .git folder.

  docker cp {project_dir}/.git/lfs/objects/21/bb/21bbebed1d66c3cad2100ceeee82ac0034dfb806b52043fab7b64b79940d5863 ybnode1:/home/db_data.csv

- Execute the SQL files against the database:

  docker exec -it ybnode1 bin/ysqlsh -h ybnode1 -f /home/db_schema.sql
  docker exec -it ybnode1 bin/ysqlsh -h ybnode1 -c "\COPY news_stories(link,headline,category,short_description,authors,date,embeddings) from '/home/db_data.csv' DELIMITER ',' CSV HEADER;"

Start the application

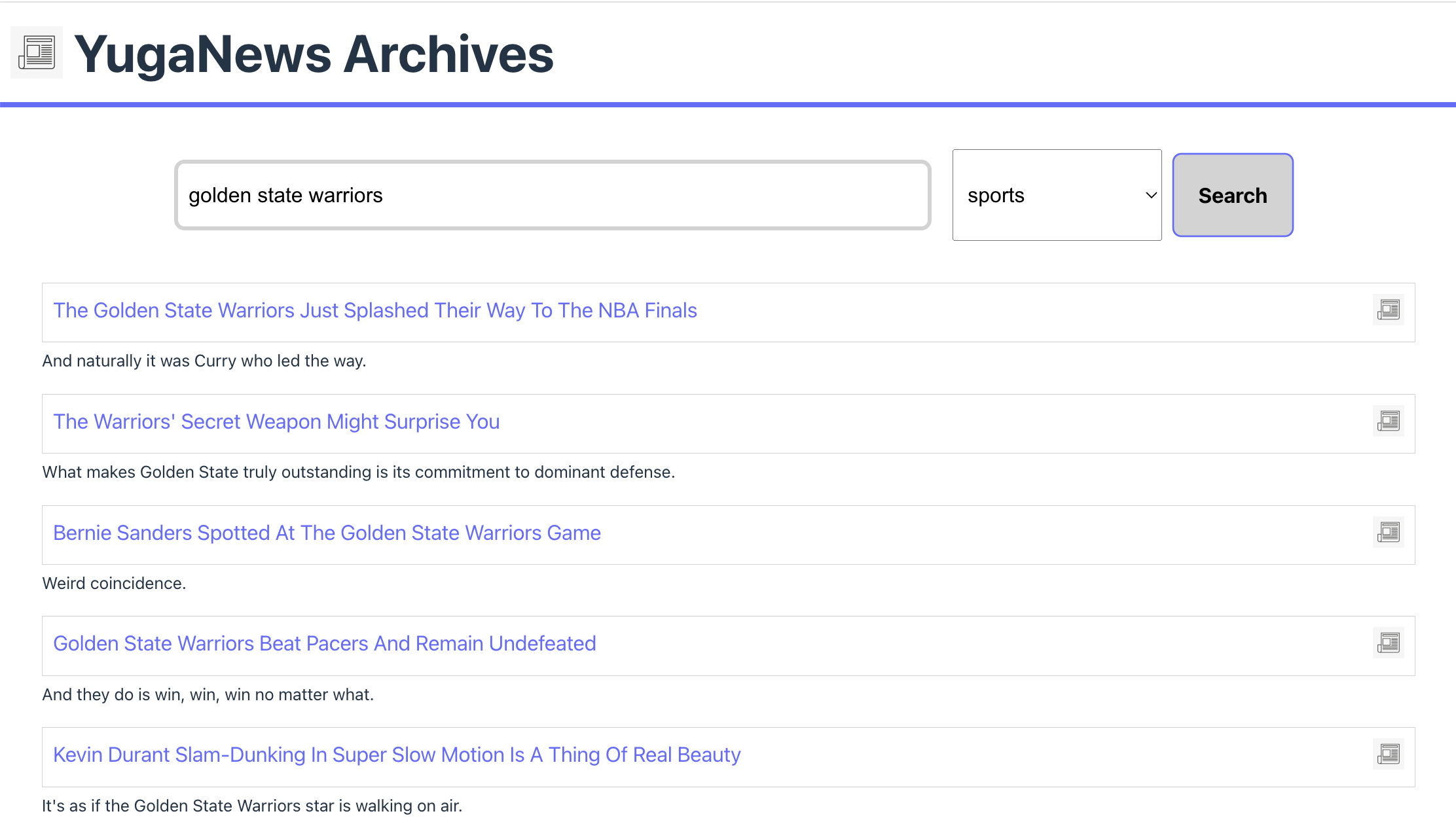

This Node.js application uses a locally running LLM to produce text embeddings. It takes a natural-language input and a news category, converts the input to an embedding, and uses pgvector to run a similarity search in YugabyteDB, which returns the matching stories.

- Start the API server:

  node {project_dir}/backend/index.js

  Server running at http://localhost:3000/

- Query the /api/search endpoint with a relevant prompt and category. For instance:

  curl "http://localhost:3000/api/search?q=olympic%20gold%20medal&category=SPORTS"

  {
    "data": [
      {
        "headline": "17-Year-Old Snowboarder Wins United States' First Gold Medal In Pyeongchang",
        "short_description": "\"I haven't had time for it to sink in yet,\" Redmond Gerard said following his victory.",
        "link": "https://www.huffingtonpost.com/entry/red-gerard-gold-olympics_us_5a7fac94e4b0c6726e141850"
      },
      {
        "headline": "Brazil Finally Wins Olympic Soccer Gold And Everybody Is In Tears",
        "short_description": "Neymar cried. And then so did everyone else.",
        "link": "https://www.huffingtonpost.com/entry/brazil-tears-olympic-soccer-rio-2016_us_57b960b4e4b00d9c3a180858"
      },
      {
        "headline": "Simone Manuel And Simone Biles Pose For Ultimate Olympic Selfie",
        "short_description": "The gold medalists are feeling the love.",
        "link": "https://www.huffingtonpost.com/entry/simone-biles-simone-manuel-selfie_us_57ae111de4b069e7e5052acf"
      },
      {
        "headline": "United States Wins 1,000th Olympic Gold Medal",
        "short_description": "That's a lot of victories.",
        "link": "https://www.huffingtonpost.com/entry/united-states-win-womens-4x100-medley-gold_us_57afd40fe4b007c36e4f0746"
      },
      {
        "headline": "German Team Doctor Recommends Olympians Drink A Beer After Competing",
        "short_description": "The country currently has 10 gold medals, the second-most of any nation.",
        "link": "https://www.huffingtonpost.com/entry/german-team-doctor-recommends-olympians-drink-a-beer-after-competing_us_5a8b8b20e4b09fc01e02a355"
      }
    ]
  }

- Run the UI and visit http://localhost:5173 to search the news archives.

  cd news-app-ui
  npm run dev

  VITE ready in 138 ms
  Local: http://localhost:5173/
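If you prefer to call the API from a script rather than curl, the following sketch shows one way to do it. It assumes Node.js 18 or later (for the global fetch function) and the API server address shown above. The helper names buildSearchUrl and searchNews are hypothetical.

```javascript
// Builds the request URL for the sample app's /api/search endpoint.
// The default base URL matches the API server started above.
function buildSearchUrl(query, category, base = "http://localhost:3000") {
  const url = new URL("/api/search", base);
  url.searchParams.set("q", query); // searchParams handles URL encoding
  url.searchParams.set("category", category);
  return url;
}

// Fetches matching stories and returns the "data" array from the response.
async function searchNews(query, category) {
  const response = await fetch(buildSearchUrl(query, category));
  if (!response.ok) {
    throw new Error(`Search failed with status ${response.status}`);
  }
  const { data } = await response.json();
  return data;
}

// Usage, with the API server running:
// searchNews("olympic gold medal", "SPORTS")
//   .then((rows) => rows.forEach((row) => console.log(row.headline)));
```

Building the URL with searchParams avoids hand-encoding spaces and other special characters in the prompt.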

Review the application

The Node.js application relies on the nomic-embed-text model, running in Ollama, to generate text embeddings for a user input. These embeddings are then used to query YugabyteDB using similarity search to generate a response.

// index.js
const express = require("express");
const ollama = require("ollama"); // Ollama Node.js client
const { Pool } = require("@yugabytedb/pg"); // the YugabyteDB Smart Driver for Node.js
...

// /api/search endpoint
app.get("/api/search", async (req, res) => {
  const query = req.query.q;
  const category = req.query.category;

  if (!query) {
    return res.status(400).json({ error: "Query parameter is required" });
  }

  try {
    // Generate text embeddings using Ollama API
    const data = {
      model: "nomic-embed-text",
      prompt: query,
    };
    const resp = await ollama.default.embeddings(data);
    const embeddings = `[${resp.embedding}]`;

    const results = await pool.query(
      "SELECT headline, short_description, link from news_stories where category = $2 ORDER BY embeddings <=> $1 LIMIT 5",
      [embeddings, category]
    );

    res.json({ data: results.rows });
  } catch (error) {
    console.error(
      "Error generating embeddings or saving to the database:",
      error
    );
    res.status(500).json({ error: "Internal server error" });
  }
});
...

The /api/search endpoint specifies the embedding model and prompt to be sent to Ollama to generate a vector representation. This is done using the Ollama JavaScript library. The response is then used to execute a cosine similarity search against the dataset stored in YugabyteDB using pgvector. Latency is reduced by pre-filtering by news category, thus reducing the search space.
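To make the ranking concrete, the following sketch computes cosine distance in plain JavaScript and imitates the query's filter-then-sort behavior on a toy in-memory dataset. It illustrates the math only. In the application, pgvector performs this work inside the database, and the function names and sample rows here are made up for the example.

```javascript
// Cosine distance between two equal-length numeric vectors:
// 1 - (a . b) / (|a| * |b|). Smaller values mean more similar vectors.
function cosineDistance(a, b) {
  if (a.length !== b.length) {
    throw new Error("Vectors must have the same number of dimensions");
  }
  let dot = 0;
  let normA = 0;
  let normB = 0;
  for (let i = 0; i < a.length; i++) {
    dot += a[i] * b[i];
    normA += a[i] * a[i];
    normB += b[i] * b[i];
  }
  return 1 - dot / (Math.sqrt(normA) * Math.sqrt(normB));
}

// In-memory imitation of the SQL query: filter by category first,
// then order the remaining rows by distance to the query vector.
function searchInMemory(rows, queryVector, category, limit = 5) {
  return rows
    .filter((row) => row.category === category)
    .map((row) => ({ ...row, distance: cosineDistance(row.embeddings, queryVector) }))
    .sort((x, y) => x.distance - y.distance)
    .slice(0, limit);
}

// Toy 2-dimensional data; the real table stores 768-dimensional vectors.
const rows = [
  { headline: "A", category: "SPORTS", embeddings: [1, 0] },
  { headline: "B", category: "SPORTS", embeddings: [0, 1] },
  { headline: "C", category: "POLITICS", embeddings: [1, 0] },
];
const top = searchInMemory(rows, [1, 0], "SPORTS");
console.log(top.map((row) => row.headline)); // [ 'A', 'B' ]
```

Story C points in the same direction as the query vector but is excluded by the category filter before any distance is computed, which is the search-space reduction described above.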

-- database/schema.sql
CREATE EXTENSION IF NOT EXISTS vector;

CREATE TABLE
  news_stories (
    id serial primary key,
    link text,
    headline text,
    category varchar(50),
    short_description text,
    authors text,
    date date,
    embeddings vector(768)
  );

CREATE INDEX NONCONCURRENTLY ON news_stories USING ybhnsw (embeddings vector_cosine_ops);

Search speed is further increased by a vector index. YugabyteDB currently supports the Hierarchical Navigable Small World (HNSW) index type. The index uses the vector_cosine_ops operator class because the backend query orders results by cosine distance, using the <=> operator.

This application is straightforward. Before executing similarity searches, embeddings for each news story must be generated and then stored in the database. Refer to the generate_embeddings.js script for details.

// generate_embeddings.js
const ollama = require("ollama");
const fs = require("fs");
const path = require("path");
const lineReader = require("line-reader");
...

const processJsonlFile = async (filePath) => {
  return new Promise((resolve, reject) => {
    let newsStories = [];
    let linesRead = 0;

    lineReader.eachLine(filePath, async (line, last, callback) => {
      try {
        console.log(linesRead);
        const jsonObject = JSON.parse(line);

        // Process each JSON object as needed
        const data = {
          model: "nomic-embed-text",
          prompt: `${jsonObject.headline} ${jsonObject.short_description}`,
        };
        const embeddings = await ollama.default.embeddings(data);
        jsonObject.embeddings = `[${embeddings.embedding}]`;
        newsStories.push(jsonObject);
        linesRead += 1;

        if (linesRead === 100 || last === true) {
          writeToCSV(newsStories);
          linesRead = 0;
          newsStories = [];
        }

        if (last === true) {
          return resolve();
        }
      } catch (error) {
        console.error("Error parsing JSON line:", error);
      }

      callback();
    });
  });
};

This script reads a JSON Lines file in which each line represents a news story. Generating embeddings for a string that combines each story's headline and short description fields provides enough data for similarity searches. The records are then appended to an output CSV file in batches of 100, to later be copied to the database. Note that similarity searches must use the same embedding model that produced the stored embeddings, so this process would need to be repeated if you change the model in the future.
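The writeToCSV function is not shown in the excerpt above. The following is a hypothetical sketch of how a batch could be appended to a CSV file, not the script's actual implementation. The column order follows the \COPY command used earlier, and the helper names are made up for illustration. The main point is that the embeddings field contains commas, so it has to be quoted.

```javascript
const fs = require("fs");

// Quotes a single CSV field when it contains a comma, quote, or line break.
// Embedded double quotes are doubled, as the CSV format requires.
function toCsvField(value) {
  const text = value === null || value === undefined ? "" : String(value);
  return /[",\r\n]/.test(text) ? `"${text.replace(/"/g, '""')}"` : text;
}

// Column order follows the \COPY command used earlier in this tutorial.
const COLUMNS = [
  "link",
  "headline",
  "category",
  "short_description",
  "authors",
  "date",
  "embeddings",
];

// Converts one story object into a single CSV line.
function toCsvRow(story) {
  return COLUMNS.map((column) => toCsvField(story[column])).join(",");
}

// Hypothetical stand-in for writeToCSV: appends one batch of rows to a file.
function appendBatch(filePath, stories) {
  const lines = stories.map(toCsvRow).join("\n") + "\n";
  fs.appendFileSync(filePath, lines);
}

// Usage:
// appendBatch("news_stories.csv", newsStories);
```

Because the \COPY command uses the HEADER option, a file produced this way would also need a header row written once before the first batch.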

Wrap-up

Ollama allows you to use LLMs locally or on-premises, with a wide array of models for different use cases.

For more information about Ollama, see the Ollama documentation.

To learn more about integrating LLMs with YugabyteDB, check out the LangChain and OpenAI tutorial.